Jumbo Frames for Jumbo Sound?

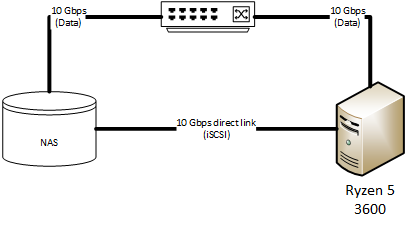

Something curious happened in late September 2019, when I added a Ryzen 5 machine as the Snakeoil development server. The network between my NAS and the Ryzen changed as follows:

In an attempt to simplify my home setup, I decided to purchase two dual-port 10 Gbps NICs: one for my NAS and the other for the new Ryzen. Each card is connected at 10 Gbps to my main switch, and there is also a direct connection between the NAS and the server itself (a dedicated iSCSI link).

iSCSI works like a SATA cable, except that it runs over normal networking equipment instead of connecting your SSD directly to the motherboard. For some reason that still eludes me, the iSCSI traffic just refused to work properly with Jumbo Frames turned on.

So, to troubleshoot the problem, I disabled Jumbo Frames throughout my entire network. Unfortunately, with an MTU of 1500, my Hifi just did not sound right.

What Went Wrong?

Simply put, my emotional connection to the music is lost.

The sound just does not excite me any more. The clarity is still there (for example, I can still hear the metronome in this track), but I'm missing a lot of other things:

- The harmonics seem to be cut short. Every pluck of a guitar and every note of a violin feels "short" and "fat", and the notes do not fade away naturally.

- Vocals seem drier. The clarity is there, but the singers do not sound natural or realistic.

- I just cannot feel the room any more. The 3D sound stage I am so used to is gone. I can still localise the sounds in a recording, but only on a flat, vertical 2D plane: if something is behind the speakers, everything is behind the speakers; if something is forward, everything is forward.

- The sound stage is a lot narrower. The music does not fill the room like it did before.

Uninspiring.

It goes without saying that I spent the next few days retracing my steps. It turned out to be Jumbo Frames: turning them off made my Hifi system sound worse.

What Are Jumbo Frames?

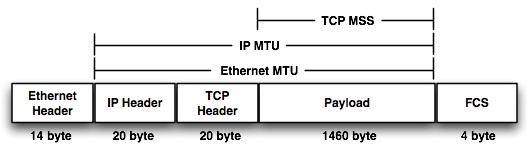

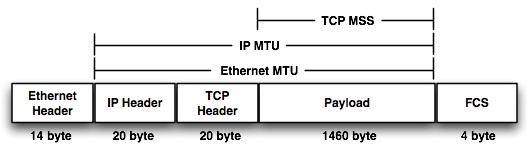

The largest packet a network link can carry in a single frame is called the Maximum Transmission Unit (MTU). The standard Ethernet MTU is 1500 bytes. Here is the breakdown of a TCP network packet.

Any MTU bigger than 1500 bytes is called a Jumbo Frame. The IP and TCP headers (20 bytes each, without options) do not grow with the MTU, so when you use Jumbo Frames (say 9000 bytes) you effectively increase the number of bytes available for the payload, from 1460 to 8960. In other words, you move more data over the network per packet. The payload contains the actual data of the network transmission, so when streaming audio from the NAS to your PC, the payload is the music itself.

As a very simple example, say you are streaming a short piece of music that is 4500 bytes long. On a standard network, the system will break this data into 4 network packets and send them over the network:

- First frame with a payload of 1460 bytes

- Second frame with a payload of 1460 bytes

- Third frame with a payload of 1460 bytes

- Last frame with a payload of 120 bytes.

The frames do not always arrive at your streaming PC in the correct sequential order (e.g. the last frame may be received first). The network stack on your streaming PC examines the sequence information in the TCP header and reassembles the data into the original.

On a Jumbo Frame enabled network, only 1 packet is sent.
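The splitting and reassembly described above can be sketched in a few lines of Python. This is an illustration only, assuming 20-byte IPv4 and 20-byte TCP headers with no options; the function names `segment` and `reassemble` are hypothetical, and in practice the operating system's network stack (not your application) does this work.

```python
import random

# Assumed header sizes: IPv4 and TCP, both without options.
IP_HEADER = 20
TCP_HEADER = 20


def segment(data: bytes, mtu: int) -> list[tuple[int, bytes]]:
    """Split data into (byte_offset, payload) pairs that each fit in one MTU."""
    max_payload = mtu - IP_HEADER - TCP_HEADER
    return [(offset, data[offset:offset + max_payload])
            for offset in range(0, len(data), max_payload)]


def reassemble(segments: list[tuple[int, bytes]]) -> bytes:
    """Rebuild the original bytes, whatever order the segments arrived in."""
    return b"".join(payload for _, payload in sorted(segments))


music = bytes(random.getrandbits(8) for _ in range(4500))  # stand-in for 4500 bytes of audio

for mtu in (1500, 9000):
    segments = segment(music, mtu)
    print(f"MTU {mtu}: payload sizes {[len(p) for _, p in segments]}")
    random.shuffle(segments)  # simulate frames arriving out of order
    assert reassemble(segments) == music
```

With these assumed header sizes, the standard MTU produces payloads of 1460, 1460, 1460 and 120 bytes, while the 9000-byte MTU carries all 4500 bytes in one segment. Real TCP sequence numbers also count bytes, but start from a random initial value rather than zero.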

When working with small amounts of data, an MTU of 1500 may make sense. But as you scale your network traffic up from kilobytes to megabytes or gigabytes, larger frame sizes may be beneficial.
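To put numbers on the scaling argument, here is a rough back-of-the-envelope sketch. It assumes 40 bytes of IPv4/TCP headers per packet and 38 bytes of Ethernet overhead per frame (14-byte header, 4-byte FCS, 8-byte preamble/start delimiter, 12-byte inter-frame gap); the 500 MB album and the function name `transfer_cost` are hypothetical.

```python
import math

# Assumed per-packet headers: IPv4 + TCP, no options.
IP_TCP_HEADERS = 40
# Ethernet header (14) + FCS (4) + preamble/start delimiter (8) + inter-frame gap (12).
ETHERNET_OVERHEAD = 38


def transfer_cost(size_bytes: int, mtu: int) -> tuple[int, float]:
    """Return (frames needed, fraction of bytes on the wire that are payload)."""
    payload_per_frame = mtu - IP_TCP_HEADERS
    frames = math.ceil(size_bytes / payload_per_frame)
    wire_bytes = size_bytes + frames * (IP_TCP_HEADERS + ETHERNET_OVERHEAD)
    return frames, size_bytes / wire_bytes


album = 500 * 1024 * 1024  # hypothetical 500 MB hi-res album
for mtu in (1500, 9000):
    frames, efficiency = transfer_cost(album, mtu)
    print(f"MTU {mtu}: {frames:,} frames, {efficiency:.1%} of wire bytes are music")
```

Under these assumptions the jumbo run needs roughly one sixth as many frames, and a slightly larger share of the bytes on the wire is actual music.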

Pick Your Poison

Sending larger frames over the network is not always better. There are pros and cons to consider, and they are the reasons 1500 was chosen as a good size for a general-purpose network.

Reduce error retransmissions

Every time your streaming PC receives a network frame, the network card checks the integrity of the received data. If the frame is corrupted it is discarded, and your NAS will need to retransmit the same data. On a connection that is slow, has limited bandwidth or is error prone, a large MTU is undesirable, as there will be more retransmissions and each one resends more data.

This is one reason why your Internet connection (WAN) often has an MTU lower than 1500 bytes.
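The retransmission trade-off can be illustrated with a deliberately simplified model. It assumes independent random bit errors, that a single bad bit ruins the whole frame, and that the whole frame is resent until it gets through; real TCP recovery is more involved. The bit error rates and the function name `wire_cost` are illustrative assumptions, not measurements.

```python
import math

IP_TCP_HEADERS = 40     # IPv4 + TCP, no options (assumption)
ETHERNET_OVERHEAD = 38  # header 14 + FCS 4 + preamble/SFD 8 + inter-frame gap 12


def wire_cost(mtu: int, bit_error_rate: float) -> float:
    """Expected bytes on the wire per byte of music delivered.

    Simplified model (assumption): bit errors are independent, every on-wire
    bit is treated as error-prone, one bad bit ruins the whole frame, and
    the whole frame is resent until it gets through.
    """
    payload = mtu - IP_TCP_HEADERS
    frame_bytes = mtu + ETHERNET_OVERHEAD
    # Probability that every bit of the frame arrives intact; log1p keeps
    # precision for tiny error rates.
    p_ok = math.exp(frame_bytes * 8 * math.log1p(-bit_error_rate))
    # On average a frame needs 1 / p_ok attempts (geometric distribution).
    return frame_bytes / (payload * p_ok)


for ber in (1e-12, 1e-5):  # illustrative: a clean LAN vs. a very noisy link
    print(f"BER {ber:g}: MTU 1500 -> {wire_cost(1500, ber):.3f}, "
          f"MTU 9000 -> {wire_cost(9000, ber):.3f}")
```

In this model the 9000-byte frames come out ahead on the clean link and clearly behind on the noisy one, which is the trade-off described above.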

Balancing Act

The network interface signals the CPU when there is data ready to send or receive. This is an expensive operation, as the network is significantly slower than the CPU. Setting the MTU to the right size reduces the number of network ⇌ CPU interactions.

Setting the MTU too low increases the number of packets on the network. The CPU is also kept busier, as there are more packets to process, and efficiency drops because each packet carries a smaller chunk of data.

Setting the MTU too high improves CPU efficiency. There may be fewer packets "flying" across the network, but each packet is now much bigger, which may result in network congestion. Additionally, if a frame is corrupted, the entire frame has to be retransmitted, adding unnecessary load to the network.
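A quick way to see the CPU side of the balance is to count how many full-size frames per second a saturated 10 Gbps link carries. This sketch assumes 38 bytes of Ethernet overhead per frame; each frame is work for the NIC and CPU, although modern network cards batch interrupts (interrupt coalescing) and offload some processing, so treat the numbers as an upper-bound illustration. The function name is hypothetical.

```python
ETHERNET_OVERHEAD = 38  # header 14 + FCS 4 + preamble/SFD 8 + inter-frame gap 12


def max_frames_per_second(link_bps: float, mtu: int) -> float:
    """Full-size frames per second needed to saturate a link."""
    return link_bps / ((mtu + ETHERNET_OVERHEAD) * 8)


for mtu in (1500, 9000):
    print(f"10 Gbps, MTU {mtu}: ~{max_frames_per_second(10e9, mtu):,.0f} frames/s")
```

That works out to roughly 813,000 frames per second at MTU 1500 versus roughly 138,000 at MTU 9000.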

Checksum Collision

The effectiveness of the checksum decreases as the payload gets bigger. A checksum is like a short summary of a longer text. In this case we are summarising a standard frame of roughly 1500 bytes into a 4-byte checksum. We cannot tell what the original bytes were from those 4 bytes, but we know the same bytes will always produce the same 4-byte checksum.

As you increase the payload, the checksum becomes less effective, because you increase the likelihood that different blocks of data will summarise to the same 4 bytes. In other words, the following scenario becomes more likely:

- The NAS sends a frame of 9000 bytes

- The NAS's network card calculates the checksum and appends it to the frame

- The data is sent over the network, and a transmission error means some bytes are not received correctly by the streaming PC

- The streaming PC looks at the corrupted 9000 bytes it received, calculates the checksum and validates it against the checksum sent in the frame

- The checksum matches (incorrectly), but the streaming PC has no way of knowing that

- Thus the bad data is passed further up the food chain.
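The sender and receiver steps above can be sketched with Python's `zlib.crc32`, which computes the same CRC-32 polynomial used for the Ethernet FCS. This is a simplification: a real NIC computes the FCS in hardware over the Ethernet header and payload, and the helper names `add_fcs`, `fcs_ok` and `weak_checksum` are hypothetical. The second half uses a deliberately weak 1-byte checksum (not what Ethernet uses) to show how a summary can match even though the data is wrong.

```python
import zlib


def add_fcs(payload: bytes) -> bytes:
    """Sender side: append a 4-byte CRC-32 over the payload."""
    return payload + zlib.crc32(payload).to_bytes(4, "little")


def fcs_ok(frame: bytes) -> bool:
    """Receiver side: recompute the CRC-32 and compare with the received one."""
    payload, received = frame[:-4], frame[-4:]
    return zlib.crc32(payload).to_bytes(4, "little") == received


frame = add_fcs(bytes(range(256)) * 35)  # 8960-byte payload, as in a 9000-byte MTU
corrupted = bytearray(frame)
corrupted[100] ^= 0x01                   # flip one bit "in transit"
print(fcs_ok(frame), fcs_ok(bytes(corrupted)))  # True False


def weak_checksum(data: bytes) -> int:
    """Toy 1-byte checksum: the sum of all bytes, modulo 256."""
    return sum(data) % 256


# Swapping two bytes corrupts the data but leaves the toy checksum unchanged.
print(weak_checksum(b"\x10\x20\x30") == weak_checksum(b"\x20\x10\x30"))  # True
```

CRC-32 catches every single-bit error, so an undetected error needs a specific multi-bit pattern; the toy checksum just makes the "matches incorrectly" case easy to see.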

The size of 1500 was chosen to balance all the factors above, i.e. 1500 is thought to be the sweet spot for everything to flow smoothly.

Why Use Jumbo Frames

To be fair, there is no real reason to. So why use Jumbo Frames? Simply put, my Hi-fi sounds better with them.

1500 is a good frame size that has stood the test of time. But modern computers, networks and even Ethernet cables are significantly better now, and reliability has improved. Think of a situation where the speed limit is 40 km/h, but every driver on the road is driving a Ferrari and holds an F1 licence.

The 40 km/h limit was devised at a time when roads were of poor quality, visibility was low and brakes were less efficient. Now that infrastructure and cars have improved over the decades, it may be safe to raise the speed limit.

Or not - drivers have not really improved.

The problem with Jumbo Frames is that they are not an official specification. Every network device maker has its own idea of what a Jumbo Frame is, and running network equipment from various manufacturers on the same network makes it worse.

Jumbo Frames are an example where a misconfiguration will screw things up.

Why? Because it's not really a standard.

- Everybody knows a Jumbo Frame is any MTU bigger than 1500 (the lower limit), but there is no official statement of what the maximum MTU can be.

- The size of 9000 is not strictly followed by all network manufacturers. Some network cards can only handle jumbo frames of 4000 bytes. Other network devices simply do not work with Jumbo Frames. Most Android devices will freeze if you use jumbo frames. The Raspberry Pi also does not support Jumbo Frames at all.

- Different manufacturers assume different things when you set the size. For most makers, the size excludes the Ethernet header and checksum (FCS); others include them, so depending on the maker you may have to specify 9018 instead of 9000. If you are using VLANs, the size increases by another 4 bytes (9022). Get this wrong and you break interoperation between your networking devices.
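The arithmetic behind 9000, 9018 and 9022 can be written down as a small helper. This is a sketch only: `frame_size` and its flags are hypothetical, and which convention a given switch or NIC expects must come from that vendor's own documentation.

```python
ETHERNET_HEADER = 14  # destination MAC (6) + source MAC (6) + EtherType (2)
FCS = 4               # frame check sequence (CRC-32)
VLAN_TAG = 4          # 802.1Q tag


def frame_size(ip_mtu: int, include_l2: bool, vlan: bool = False) -> int:
    """Number a vendor might expect in its 'MTU' or 'jumbo frame' setting.

    include_l2=False: the size counts only the IP packet (most makers).
    include_l2=True:  the size also counts the Ethernet header and FCS,
                      plus the VLAN tag when vlan=True.
    """
    if not include_l2:
        return ip_mtu
    size = ip_mtu + ETHERNET_HEADER + FCS
    if vlan:
        size += VLAN_TAG
    return size


print(frame_size(9000, include_l2=False))            # 9000
print(frame_size(9000, include_l2=True))             # 9018
print(frame_size(9000, include_l2=True, vlan=True))  # 9022
```

The point is that all three numbers can describe the same 9000-byte IP packet, so the value you type has to match each device's convention.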

Mixing and matching different frame sizes on the same network is a definite recipe for disaster. If anything goes wrong you'll lose network connectivity to a particular device, and in the worst case you may even bring down the entire network.

My advice is: do not attempt this. But then again, it sounds so good! Pick your poison; if you want to go ahead, read on.

When Not To Use Jumbo Frames

I'll start by saying when you don't need Jumbo Frames.

- If you store all your music files on the Snakeoil computer, you don't need Jumbo Frames

- If you have no idea how networking works, it's probably not a good idea to attempt this tweak until you're more familiar with it, or find somebody who is

- If you're using a Raspberry Pi as your music streamer. As of this writing Jumbo Frames are not supported, so it's pointless to set this up; you would only slow down the network with Jumbo Frames on

- If you're using switches that do not support Jumbo Frames. Enabling them will kill network connectivity

- If your NAS and streaming PC are not using Intel network cards

When To Use Jumbo Frames

Use Jumbo Frames if you think you satisfy the following two conditions:

- You want to keep all your music library on a NAS

- You want nothing but the best, subject to condition 1.

Condition 2 is a bit contradictory because in our early experimentation, music stored locally on an SSD appeared to sound better, hence the qualifier. But for some, storing music locally is just not a good option.

For example, I have set up a Plex server so that I can stream music off the NAS when I am out driving (Android Carplay). Having a central music repository is the best solution for me, as it balances all my needs.

In my next article, I will set up the minimum equipment you'll need for a Jumbo Frame enabled network. The article will also detail the steps you need to take to enable Jumbo Frames, and some basic diagnostics to ensure everything is working.

Bear in mind that what works for me doesn't necessarily work for you. I am happy and glad that I found that emotional connection again once I turned Jumbo Frames back on, but that does not mean you will reap the same benefits I did.

All you can do is attempt to recreate what I did, and hope you get similar results. If it works, you have a better system. If it doesn't, well, congratulations! You have just wasted time and money for no gain!

Comments


"In my next article, I will setup the minimum equipment you’ll need for a Jumbo Frame enabled network. The article will also detail the steps you need to take to enable Jumbo Frames, and some basic diagnostics to ensure everything is working."

Yes! I await this article with great interest; we are at the beginning of local network optimization. Have you had a look at http://fasterdata.es.net/network-tuning/udp-tuning/?
